Thales has created a new tool for flight simulator instructors, allowing them to better understand a pilot’s cognitive state — thus enabling more effective training sessions.

Designed as an option for Reality H simulators, the HuMans system makes the most of recent advancements in eye-tracking and neuroscience. “Sitting behind the pilot, the instructor was missing what the pilot is looking at and how he comprehends the situation,” said Benoit Plantier, CEO, Thales Training & Simulation (TTS).

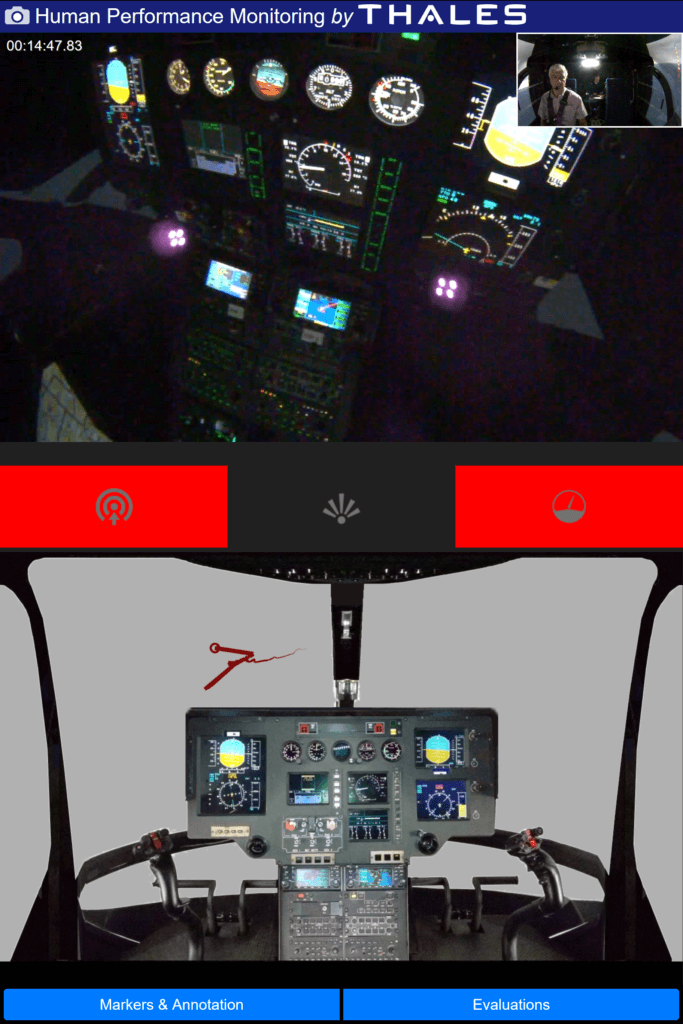

HuMans uses an eye-tracking system where cameras and accompanying image analysis software follow pupil movement. The cameras are located on the instrument panel and over the pilot’s head. For the instructor, the interface is a tablet. The combination opens a world of possibilities.

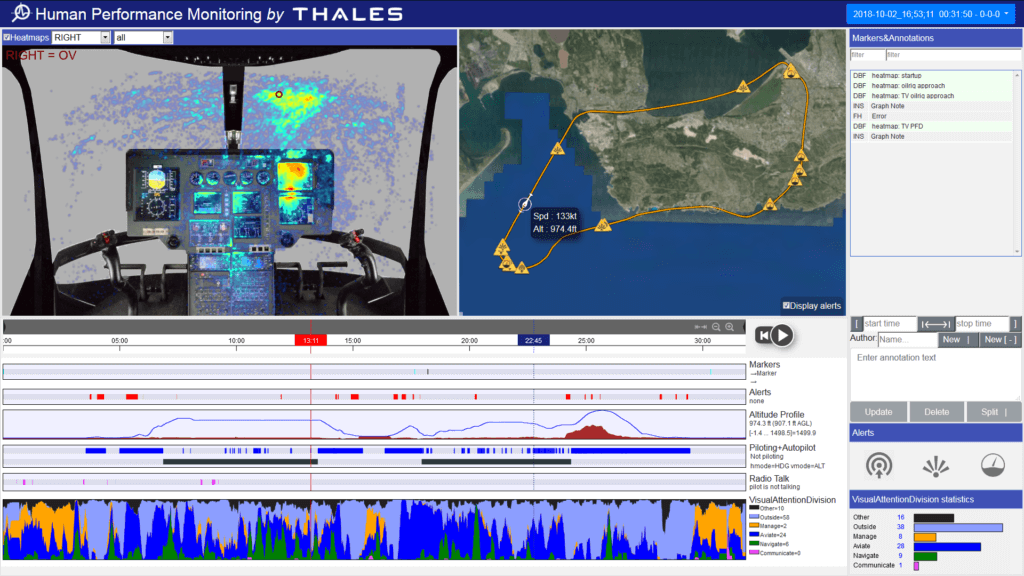

The instructor may simply look at the pilot’s face or the instruments. Or he can follow a dot located at the focus of the pilot’s gaze. Because the display keeps the focus points from the last few seconds (the duration is adjustable), the instructor sees a line tracing the pilot’s recent gaze path.
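The adjustable trail described above can be pictured as a sliding time window over gaze samples. The following is a minimal sketch of that idea only, not Thales's implementation; the names `GazeSample` and `GazeTrail`, the coordinate units, and the default window length are all assumptions made for illustration.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class GazeSample:
    t: float  # timestamp in seconds
    x: float  # horizontal gaze position (arbitrary display units, assumed)
    y: float  # vertical gaze position (arbitrary display units, assumed)


class GazeTrail:
    """Keeps only the gaze samples from the last `window_s` seconds."""

    def __init__(self, window_s: float = 3.0) -> None:
        # window_s plays the role of the adjustable trail duration.
        self.window_s = window_s
        self._samples = deque()

    def add(self, sample: GazeSample) -> None:
        """Append a new sample and drop those older than the window."""
        self._samples.append(sample)
        cutoff = sample.t - self.window_s
        while self._samples and self._samples[0].t < cutoff:
            self._samples.popleft()

    def polyline(self) -> list:
        """Points to draw, oldest first; the last one is the current dot."""
        return [(s.x, s.y) for s in self._samples]
```

Feeding samples in time order and drawing `polyline()` after each call to `add` would give a dot with a fading line behind it; changing `window_s` lengthens or shortens that line.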

Three alarms draw the instructor’s attention to particular problems, said Christophe Bruzy, TTS’s technical director. The first signals tunnel vision, meaning excessive focus on a single instrument. The second triggers when the pilot has not looked “outside” for too long. The third indicates too little time spent looking at instruments. Pointing out such issues helps the student improve situational awareness.
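To make the three alarm conditions concrete, here is a hedged sketch of how they could be evaluated over a list of gaze fixations. The article gives no thresholds or data format, so the `Fixation` record, the alarm names, and every threshold value below are hypothetical.

```python
from dataclasses import dataclass

OUTSIDE = "outside"  # assumed label for looking out of the cockpit


@dataclass(frozen=True)
class Fixation:
    start: float  # seconds
    end: float    # seconds
    target: str   # OUTSIDE or an instrument name (hypothetical labels)


def check_alarms(fixations, now, *, tunnel_s=10.0, outside_gap_s=20.0,
                 window_s=60.0, min_instrument_share=0.2):
    """Return the set of active alarms. All thresholds are illustrative."""
    alarms = set()
    if not fixations:
        return alarms

    # 1. Tunnel vision: the latest run of fixations stays on one instrument.
    last = fixations[-1]
    if last.target != OUTSIDE:
        run_start = last.start
        for f in reversed(fixations[:-1]):
            if f.target != last.target:
                break
            run_start = f.start
        if last.end - run_start >= tunnel_s:
            alarms.add("tunnel_vision")

    # 2. No look outside for too long (counted from session start if never).
    outside_ends = [f.end for f in fixations if f.target == OUTSIDE]
    last_outside = max(outside_ends) if outside_ends else fixations[0].start
    if now - last_outside > outside_gap_s:
        alarms.add("outside_neglected")

    # 3. Too small a share of the recent window spent on instruments.
    window_start = max(now - window_s, fixations[0].start)
    elapsed = now - window_start
    on_instruments = sum(
        max(0.0, min(f.end, now) - max(f.start, window_start))
        for f in fixations if f.target != OUTSIDE
    )
    if elapsed > 0 and on_instruments / elapsed < min_instrument_share:
        alarms.add("instruments_neglected")

    return alarms
```

For example, a pilot who glances outside for five seconds and then stares at the airspeed indicator for fifteen would raise only the tunnel-vision alarm under these assumed thresholds.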

For an instructor, the tool can provide a clear idea of how comfortable the student is. If the student is both successful and relaxed, the instructor can inject more difficulties into the scenario, making better use of training time. Or, if the mission proves too difficult, the instructor can adapt it to the trainee’s capabilities.

After the training session, the instructor can see a “heat map” of the pilot’s gaze. The map integrates the focus of the pilot’s eyes over an adjustable period of time. Depending on the time the pilot spent looking at a given area, the area appears in different colors. The heat map is so precise that it draws the shape of the various displays.
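A heat map of this kind amounts to accumulating dwell time per screen region over a chosen period. The sketch below shows one simple way to do that on a uniform grid; the function name `dwell_heatmap`, the sample format, and the assumption that each gaze sample lasts until the next one are illustrative choices, not details from Thales.

```python
import math


def dwell_heatmap(samples, width, height, cell, t_start, t_end):
    """Accumulate gaze dwell time (seconds) per grid cell.

    samples: list of (t, x, y) tuples sorted by t. Each sample is assumed
    to hold until the next one, so the final sample adds no dwell time.
    t_start, t_end: the adjustable period over which to integrate.
    """
    cols = math.ceil(width / cell)
    rows = math.ceil(height / cell)
    grid = [[0.0] * cols for _ in range(rows)]

    for (t0, x, y), (t1, _, _) in zip(samples, samples[1:]):
        # Clip the dwell interval to the requested period.
        dwell = min(t1, t_end) - max(t0, t_start)
        if dwell <= 0:
            continue
        # Ignore gaze points that fall outside the mapped area.
        if not (0 <= x < width and 0 <= y < height):
            continue
        grid[int(y // cell)][int(x // cell)] += dwell

    return grid
```

Mapping each cell's accumulated time to a color scale would then produce the picture; a finer `cell` size gives the sharper outlines of individual displays, at the cost of needing more samples per cell.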

The technology also allows for a portion of the “flight” to be replayed. The instructor just selects a particular location (picked on the map) or time reference on which to concentrate, allowing their comments to be contextualized. For example, they may tell the trainee “you were looking at the right/wrong instrument” at a precise moment during the flight. It could have been when flying over an obstacle, during the approach, or at the beginning of a rain shower.

Thales hopes these new capabilities will improve training by making it “evidence-based.” The instructor can factually measure student progress, Bruzy emphasized. The trainee’s methods, such as their visual pattern, can be validated, and trainees can see their own progress objectively.

HuMans took Thales five years of research and development. “We have benefitted from 15 years of academic research and we have collaborated for five-to-six years with laboratories,” said Bruzy. The company drew on advanced studies in cognitive science and mathematics performed at France’s national research institute, CNRS. The IRBA armed forces biomedical research institute also helped with human factors analysis.

Non-intrusive eye tracking, which does not require the pilot to wear a device, was one of the technologies that made HuMans possible. Another was artificial intelligence. The massive amount of data collected during a training session (70 gigabytes, taking video into account) has to be made “intelligible for a human being,” said Bruzy. “You have to offer digested data to the instructor.”

The product was developed together with instructors so that the end user’s needs were factored in from the start. Thanks to that “user experience” approach, HuMans incorporates fewer indicators than if it had been developed only by engineers. “The tool can be mastered in one hour,” said Etienne Chevreau, TTS’s head of strategy and marketing.

Similarly, additional sensors were used during development to record respiratory and heart rates, along with an encephalogram. They were used to create models but, to keep the installation straightforward, only the eye-tracking system was retained in the product.

In the future, however, non-intrusive equipment may be introduced to monitor the trainee’s heart rate. Measuring heart-rate variation and inter-beat intervals may be useful. From those and other, unspecified, parameters, the mental workload could be inferred, Bruzy explained. A four-level bar, representing the student’s mental workload from low to very busy, could then be shown to the instructor. For a complex mission, mental workload has to be kept below a certain threshold, Plantier pointed out.
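As a rough illustration of the heart-rate quantities mentioned above, the sketch below derives inter-beat intervals from beat timestamps and computes RMSSD, a standard measure of beat-to-beat variation. The mapping to a four-level bar is purely hypothetical: Thales has not said which parameters or thresholds it would use, the two middle level names are invented here, and the sketch leans only on the commonly reported tendency for lower variability to accompany higher workload.

```python
import math


def inter_beat_intervals(beat_times_s):
    """Durations between consecutive heartbeats, in seconds."""
    return [b - a for a, b in zip(beat_times_s, beat_times_s[1:])]


def rmssd_ms(ibis_s):
    """Root mean square of successive interval differences, in milliseconds."""
    diffs = [(b - a) * 1000.0 for a, b in zip(ibis_s, ibis_s[1:])]
    if not diffs:
        raise ValueError("need at least two inter-beat intervals")
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def workload_level(rmssd, thresholds_ms=(50.0, 35.0, 20.0)):
    """Map RMSSD to one of four levels. Thresholds are made-up placeholders."""
    levels = ("low", "moderate", "busy", "very busy")
    for level, threshold in zip(levels, thresholds_ms):
        if rmssd >= threshold:
            return level
    return levels[-1]
```

A real system would calibrate any such thresholds per trainee and combine them with the other parameters Bruzy alluded to; a single fixed cut-off like this one is only meant to show the shape of the computation.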

“At the center of the training process is now the human being, with a behavior that can induce dangerous situations [and can therefore be corrected],” said Bruzy. Technical and behavioral skills are continuously evaluated, using facts. The new tool was devised to help the instructor meet teaching goals, Bruzy stressed.

Once enough Reality H simulators are in service with the HuMans option, Thales hopes to be able to quantify the time savings the tool provides in training.